For an up-to-date list of my publications, please see my Google Scholar profile: Publications at Google Scholar

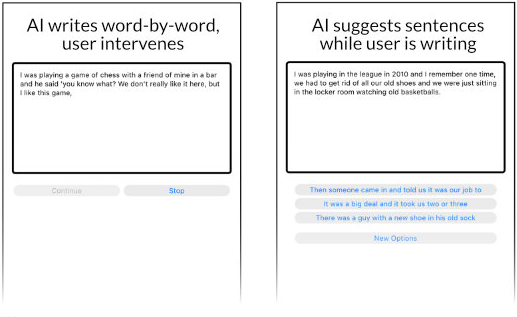

Suggestion Lists vs. Continuous Generation: Interaction Design for Writing with Generative Models on Mobile Devices Affect Text Length, Wording and Perceived Authorship

In: Proceedings of Mensch und Computer 2022, ACM Press

Paper: Download PDF - DOI: www.doi.org/10.1145/3543758.3543947

Abstract:

Neural language models have the potential to support human writing. However, questions remain on their integration and influence on writing and output. To address this, we designed and compared two user interfaces for writing with AI on mobile devices, which manipulate levels of initiative and control: 1) Writing with continuously generated text, the AI adds text word-by-word and user steers. 2) Writing with suggestions, the AI suggests phrases and user selects from a list. In a supervised online study (N=18), participants used these prototypes and a baseline without AI. We collected touch interactions, ratings on inspiration and authorship, and interview data. With AI suggestions, people wrote less actively, yet felt they were the author. Continuously generated text reduced this perceived authorship, yet increased editing behavior. In both designs, AI increased text length and was perceived to influence wording. Our findings add new empirical evidence on the impact of UI design decisions on user experience and output with co-creative systems.

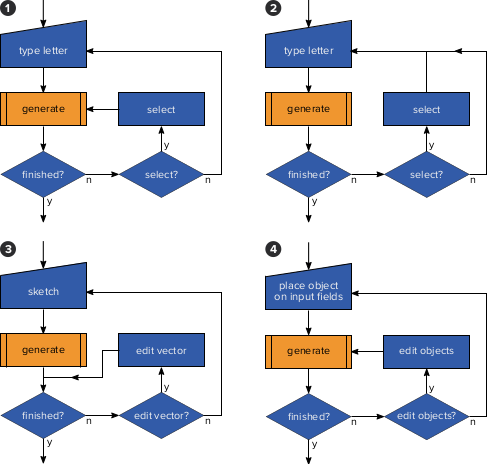

Examining Autocompletion as a Basic Concept for Interaction with Generative AI

In: i-com, vol. 19, no. 3, 2020, pp. 251-264.

Paper: Download PDF (Author Version) - DOI: www.doi.org/10.1515/icom-2020-0025

Abstract:

Autocompletion is an approach that extends and continues partial user input. We propose to interpret autocompletion as a basic interaction concept in human-AI interaction. We first describe the concept of autocompletion and dissect its user interface and interaction elements, using the well-established textual autocompletion in search engines as an example. We then highlight how these elements reoccur in other application domains, such as code completion, GUI sketching, and layouting. This comparison and transfer highlights an inherent role of such intelligent systems to extend and complete user input, in particular useful for designing interactions with and for generative AI. We reflect on and discuss our conceptual analysis of autocompletion to provide inspiration and a conceptual lens on current challenges in designing for human-AI interaction.

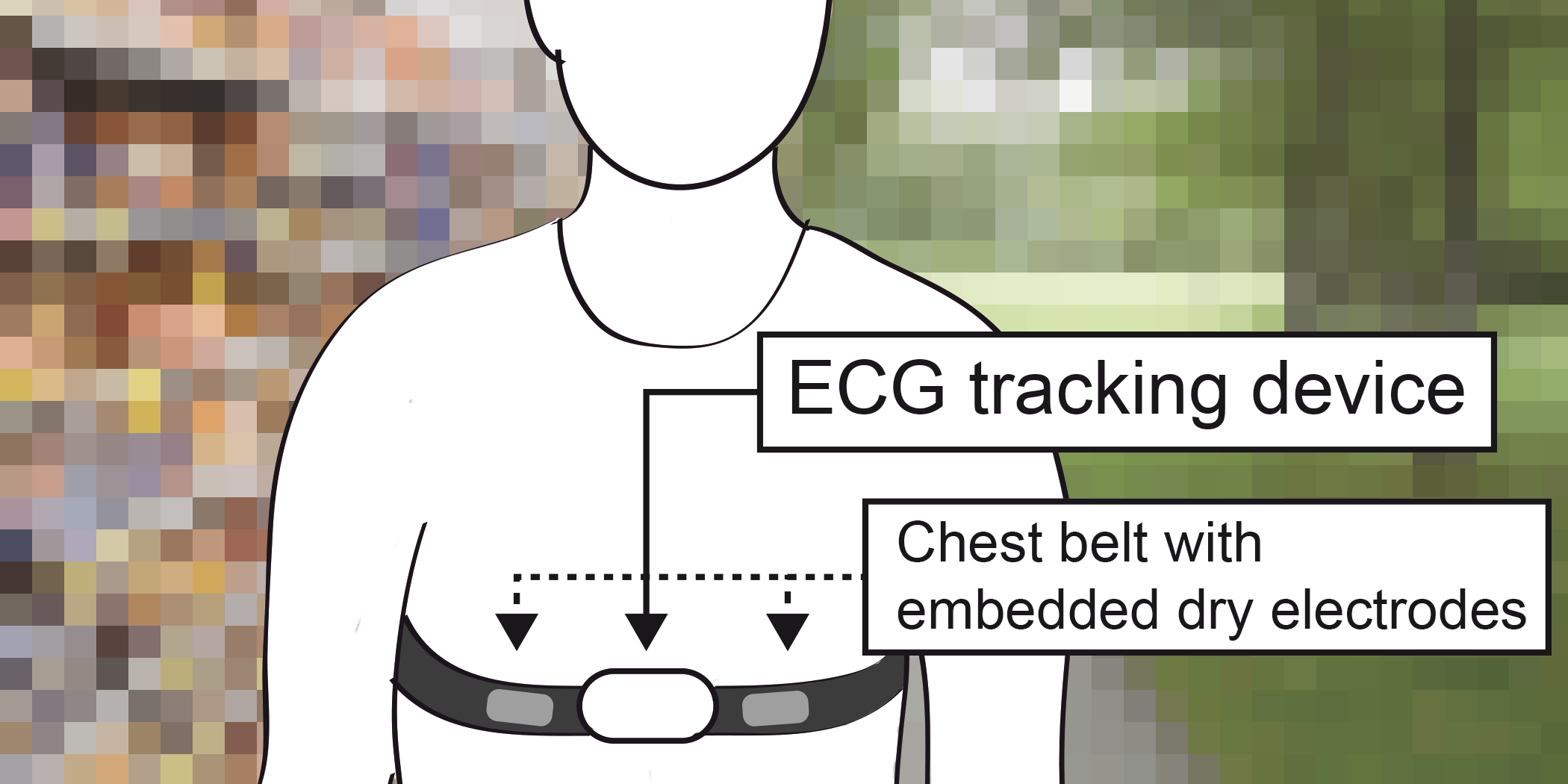

Heartbeats in the Wild: A Field Study Exploring ECG Biometrics in Everyday Life

In: Proc. of the 2020 CHI Conference on Human Factors in Computing Systems, ACM Press

Paper: Download PDF - DOI: www.doi.org/10.1145/3313831.3376536

Abstract:

This paper reports on an in-depth study of electrocardiogram (ECG) biometrics in everyday life. We collected ECG data from 20 people over a week, using a non-medical chest tracker. We evaluated user identification accuracy in several scenarios and observed equal error rates of 9.15% to 21.91%, heavily depending on 1) the number of days used for training, and 2) the number of heartbeats used per identification decision. We conclude that ECG biometrics can work in the wild but are less robust than expected based on the literature, highlighting that previous lab studies obtained highly optimistic results with regard to real life deployments. We explain this with noise due to changing body postures and states as well as interrupted measures. We conclude with implications for future research and the design of ECG biometrics systems for real world deployments, including critical reflections on privacy.
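For readers unfamiliar with the metric, the equal error rate (EER) mentioned in the abstract is the operating point at which the false accept rate and the false reject rate of a biometric system are equal. The following is a minimal illustrative sketch of how an EER can be estimated from match scores. It is not the code used in the paper: the function name `equal_error_rate`, the toy scores, and the assumption that higher scores mean "more likely the genuine user" are all made up for illustration.

```python
def equal_error_rate(genuine, impostor):
    """Estimate the EER from similarity scores (assumption: higher = more genuine).

    Returns (eer, threshold), where threshold is the candidate at which the
    false accept rate (FAR) and false reject rate (FRR) are closest.
    """
    # Every observed score is a candidate decision threshold.
    thresholds = sorted(set(genuine) | set(impostor))
    best = None
    for t in thresholds:
        # A genuine attempt is rejected if its score falls below the threshold.
        frr = sum(s < t for s in genuine) / len(genuine)
        # An impostor attempt is accepted if its score reaches the threshold.
        far = sum(s >= t for s in impostor) / len(impostor)
        gap = abs(far - frr)
        if best is None or gap < best[0]:
            # Report the midpoint of FAR and FRR as the EER estimate.
            best = (gap, (far + frr) / 2, t)
    return best[1], best[2]


# Hypothetical toy scores, not data from the study.
genuine_scores = [0.9, 0.8, 0.7, 0.4]
impostor_scores = [0.6, 0.3, 0.2, 0.1]
eer, threshold = equal_error_rate(genuine_scores, impostor_scores)
print(f"EER = {eer:.2%} at threshold {threshold}")  # EER = 25.00% at threshold 0.6
```

With few scores, FAR and FRR rarely cross exactly, so this sketch reports the midpoint at the closest threshold; real evaluations typically interpolate over many more comparisons.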

ECG Biometrics Code Repository:

We are happy to share our code for research purposes. If you are interested in using our codebase, please contact me (via the contact form on this website or my academic email), and I will grant you access to our repository on gitlab.com.

How to Hold Your Phone When Tapping: A Comparative Study of Performance, Precision, and Errors

In: Proc. of the ACM International Conference on Interactive Surfaces and Spaces (ISS), ACM Press, pp. 115-127.

Paper: Download PDF - DOI: www.doi.org/10.1145/3279778.3279791

Abstract:

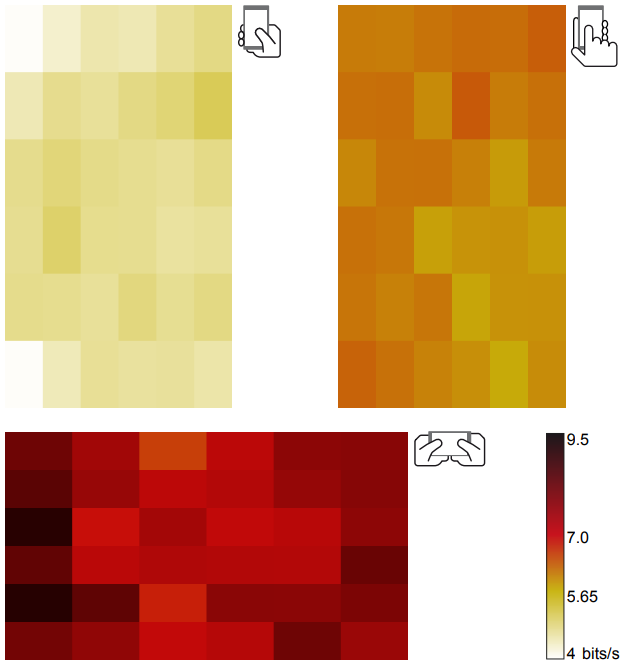

We argue that future mobile interfaces should differentiate between various contextual factors like grip and active fingers, adjusting screen elements and behaviors automatically, thus moving from merely responsive design to responsive interaction. Toward this end we conducted a systematic study of screen taps on a mobile device to find out how the way you hold your device impacts performance, precision, and error rate. In our study, we compared three commonly used grips and found that the popular one-handed grip, tapping with the thumb, yields the worst performance. The two-handed grip, tapping with the index finger, is the most precise and least error-prone method, especially in the upper and left halves of the screen. In landscape orientation (two-handed, tapping with both thumbs) we found the best overall performance with a drop in performance in the middle of the screen. Additionally, we found differentiated trade-off relationships and directional effects. From our findings we derive design recommendations for interface designers and give an example of how to make interactions truly responsive to the context-of-use.

Digital Footprints of Sensation Seeking: A Traditional Concept in the Big Data Era

In: Zeitschrift für Psychologie.

Abstract:

The increasing usage of new technologies implies changes for personality research. First, human behavior becomes measurable by digital data, and second, digital manifestations to some extent replace conventional behavior in the analog world. This offers the opportunity to investigate personality traits by means of digital footprints. In this context, the investigation of the personality trait sensation seeking attracted our attention as objective behavioral correlates have been missing so far. By collecting behavioral markers (e.g., communication or app usage) via Android smartphones, we examined whether self-reported sensation seeking scores can be reliably predicted. Overall, 260 subjects participated in our 30-day real-life data logging study. Using a machine learning approach, we evaluated cross-validated model fit based on how accurately sensation seeking scores can be predicted in unseen samples. Our findings highlight the potential of mobile sensing techniques in personality research and show exemplarily how prediction approaches can help to foster an increased understanding of human behavior.

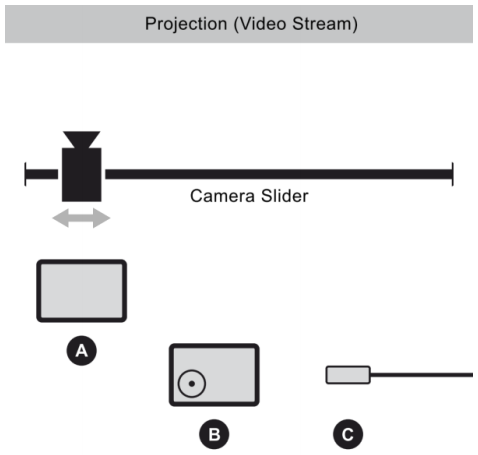

Towards an Evaluation Framework: Implicit Evaluation of Sense of Agency in a Creative Continuous Control Task

In: Proc. of the 2017 CHI Conference Extended Abstracts on Human Factors in Computing Systems, ACM Press, pp. 1686-1693.

Paper: Download PDF - DOI: www.doi.org/10.1145/3027063.3053072

Abstract:

Reducing workload in a UI while still letting users feel in control is not trivial. This workload/control tradeoff is described in the literature and deserves attention in design practice. However, there is no evaluation framework for it supporting both explicit and implicit measurement, mainly because measuring sense of control implicitly is just difficult. A recently proposed implicit evaluation methodology - measuring sense of agency via interval estimation - seems promising and calls for further investigation. We studied its feasibility in a continuous control task - cinematographic camera motion - and compared a multi-touch software joystick to a mid-air gesture UI (N=8). Data was collected both explicitly and implicitly. Our results suggest that the mid-air gesture design does not increase the sense of agency. Both methodologies yielded similar results but the implicit one was more sensitive and the combination of both led to convincing overall results.